Apache Pig | Live Big Data Scripting & Data Flow Course

Master Apache Pig — Instructor-led Live Online Training for Big Data Engineers

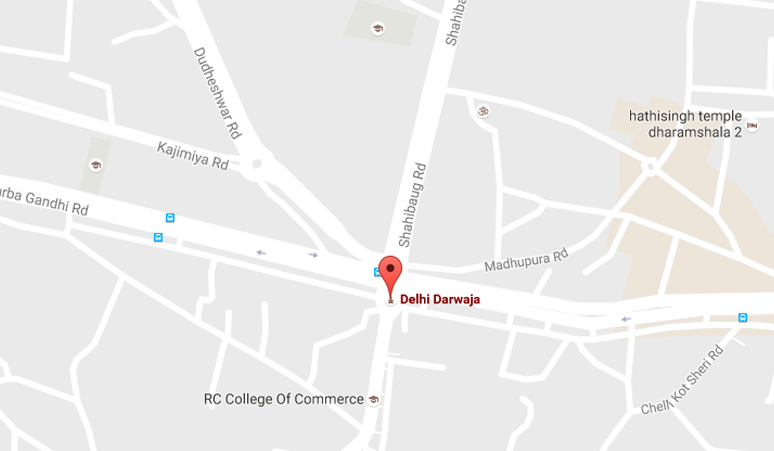

Apache Pig Training by Laliwala IT is designed for data engineers, big data developers, and analytics professionals who want to master Pig Latin scripting for data transformation on Hadoop. Based in Ahmedabad, Gujarat, India, we deliver live, interactive, project-based training covering Pig Latin, data flow operations, UDF development, optimization techniques, and integration with the Hadoop ecosystem.

Our online Apache Pig course features real-time instructor-led classes, hands-on coding labs, flexible schedules, and career guidance. Whether you're a beginner or an experienced professional, this training prepares you to work as a Pig developer on big data projects.

Course Modules — Comprehensive Apache Pig Training (4-5 Weeks | 35+ Hours)

- Module 1: Introduction to Apache Pig & Hadoop – Pig vs MapReduce vs Hive, Pig architecture, execution modes (Local & MapReduce), installation & setup

- Module 2: Pig Latin Basics – Data types, field definitions, loading/storing data, simple transformations, FOREACH, FILTER, LIMIT, ORDER

- Module 3: Grouping & Joins – GROUP operator, COGROUP, JOIN types (inner, outer, self), CROSS, nested operations

- Module 4: Complex Data Types – Tuples, bags, maps, nested structures, flattening, operating on complex data

- Module 5: Built-in Functions & Macros – String functions, math functions, eval functions, bag/tuple functions, creating macros for reusability

- Module 6: User Defined Functions (UDFs) – Writing UDFs in Java/Python, Eval UDFs, Filter UDFs, Load/Store UDFs, UDF registration & usage

- Module 7: Pig Advanced Operations – SPLIT, STREAM, SAMPLE, PARALLEL, DISTINCT, UNION, debugging Pig scripts

- Module 8: Optimization & Performance Tuning – MapReduce job optimization, combiner usage, data locality, compression, parallelism tuning, explain plan analysis

- Module 9: Pig Integration with Hadoop Ecosystem – Pig with HDFS, HBase, Avro, Parquet, SequenceFile, integration with HCatalog & Hive

- Module 10: Pig in Production – Running Pig scripts via Grunt shell, Pig Server, Pig with Oozie workflow, scheduling, error handling, logging

- Module 11: Real-world Use Cases – Log analysis, ETL pipelines, data cleansing, clickstream analysis, data preparation for recommendation systems

- Module 12: Capstone Project – Build an end-to-end data processing pipeline using Pig for a real business scenario

What's Included in Apache Pig Training?

- Live Instructor-led classes (real-time Q&A, script walkthroughs, debugging sessions)

- Recorded sessions for revision anytime

- Hands-on coding labs on Hadoop clusters

- Study materials (PDFs, Pig scripts, UDF code samples)

- Certificate of completion (recognized by industry partners)

- Placement assistance – resume & interview prep, big data role guidance

- Lifetime access to course updates and student community

Detailed Curriculum Highlights

Week 1-2: Pig Latin Fundamentals & Data Transformations

- HDFS architecture overview, MapReduce basics for context

- Pig installation, configuring Hadoop for Pig, Grunt shell commands

- Loading data from HDFS/Local: LOAD, USING, PigStorage, TextLoader, JsonLoader

- Schema definition: specifying data types, handling missing values

- Basic transformations: FOREACH with expressions, alias assignment

- Filtering data: FILTER with complex conditions, Boolean operators

- Sorting and limiting: ORDER BY, LIMIT, ascending/descending

- GROUP operator: grouping by single/multiple keys, understanding nested bags

Week 3-4: Joins, Complex Data & UDF Development

- JOIN types: inner join, left/right/full outer join, joining multiple datasets

- COGROUP for analyzing grouped data from multiple relations

- CROSS product, UNION, DISTINCT operations

- Working with complex data: nested bags, flattening, map lookups

- Built-in functions: CONCAT, SUBSTRING, UPPER, LOWER, REGEX_EXTRACT, TOKENIZE

- Creating macros for reusable Pig logic

- Writing Java UDFs: extending EvalFunc, FilterFunc, implementing exec() method

- Registering UDFs, using UDFs in Pig Latin scripts
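
To give a flavor of the UDF topics above, here is a minimal sketch of an eval UDF. It is written in Python rather than Java so that it can be tried without the Pig jars, and it assumes Pig's `streaming_python` support and its `pig_util` helper module. The function name `extract_domain`, the output field name `domain`, and the file name `udfs.py` are illustrative assumptions, not part of the course material.

```python
# Sketch of a Python eval UDF for Pig; all names here are illustrative.
try:
    # Provided by Pig when the script is registered with streaming_python.
    from pig_util import outputSchema
except ImportError:
    # Fallback (an assumption for local testing): a no-op stand-in so the
    # function can be exercised outside Pig.
    def outputSchema(schema):
        def wrap(func):
            func.output_schema = schema
            return func
        return wrap


@outputSchema("domain:chararray")
def extract_domain(url):
    """Return the lower-cased host part of a URL, or None for empty input."""
    if url is None:
        return None
    # Drop the scheme ("http://") if present, then cut at the first "/".
    rest = url.split("://", 1)[-1]
    # Strip any ":port" suffix and normalize case.
    host = rest.split("/", 1)[0].split(":", 1)[0].strip().lower()
    return host or None
```

In a Pig Latin script, a file like this would typically be registered with something along the lines of `REGISTER 'udfs.py' USING streaming_python AS myudfs;` and then called inside a FOREACH as `myudfs.extract_domain(url)`. The Java route listed above follows the same idea, with the logic placed in the `exec()` method of a class extending `EvalFunc`.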

Week 5-6: Optimization, Integration & Capstone Project

- Pig optimization techniques: using PARALLEL, combining small files, compression

- EXPLAIN: reading the logical, physical, and MapReduce execution plans

- ILLUSTRATE for debugging and testing Pig scripts

- Integration with HCatalog: accessing Hive tables, schema management

- Pig with HBase: reading/writing HBase tables via HBaseStorage

- Avro & Parquet support: row-oriented (Avro) and columnar (Parquet) storage integration

- Pig with Oozie: scheduling Pig workflows, coordinating jobs

- Capstone Project: build a complete ETL pipeline for clickstream log analysis

Real-World Projects & Use Cases

- Web server log analysis: parsing, filtering, aggregating traffic metrics

- Data cleansing pipeline: handling missing values, standardizing formats

- Customer purchase history analysis: joins, grouping, ranking

- Sensor data processing: time-series aggregation, anomaly detection preparation

- Social media data analysis: sentiment preprocessing, user engagement metrics

- Retail data ETL: product sales aggregation, inventory reporting

- Project: Build a recommendation data preparation pipeline

Why Choose Laliwala IT for Apache Pig Online Training?

- Industry Expert Trainers: 10+ years of big data & Hadoop experience

- Live Cluster Access: Practice on real distributed Hadoop clusters

- Flexible Batches: Weekday & weekend options, recorded backup

- Small Batch Size: Max 10-12 students for personalized attention

- Affordable Fees: High-quality training from Ahmedabad's tech hub

- Job Assistance: Tie-ups with big data companies & placement support

- Certification: ISO & Govt recognized certificate after completion

- 24/7 Lab Access: Online Hadoop clusters & learning management system

- Global Recognition: Trained students from India, USA, UK, Canada, UAE

- Post-training Support: Doubt clearing via forum & email for 6 months

Tools & Technologies Covered

- Apache Pig 0.17 (latest release), Pig Latin, Grunt shell

- Hadoop Ecosystem: HDFS, MapReduce, YARN

- Integration: HBase, HCatalog, Hive, Avro, Parquet, ORC

- Programming: Java (UDFs), Python (Streaming UDFs), Bash

- Scheduling: Apache Oozie, cron jobs

- Build Tools: Maven for UDF development, Eclipse/IntelliJ IDEA

- Practice Environment: Cloudera/Hortonworks sandbox

Who Should Join?

- Data Engineers & Big Data Developers

- ETL Developers working on Hadoop platforms

- Data Analysts wanting to scale data processing

- Software Engineers transitioning to big data

- College students aiming for data engineering careers

- Working professionals wanting Pig specialization

- Hadoop administrators learning data processing pipelines


© 2025 Laliwala IT. All rights reserved.