Apache Hadoop Corporate Training

Enterprise Big Data & Hadoop Ecosystem Training for Teams

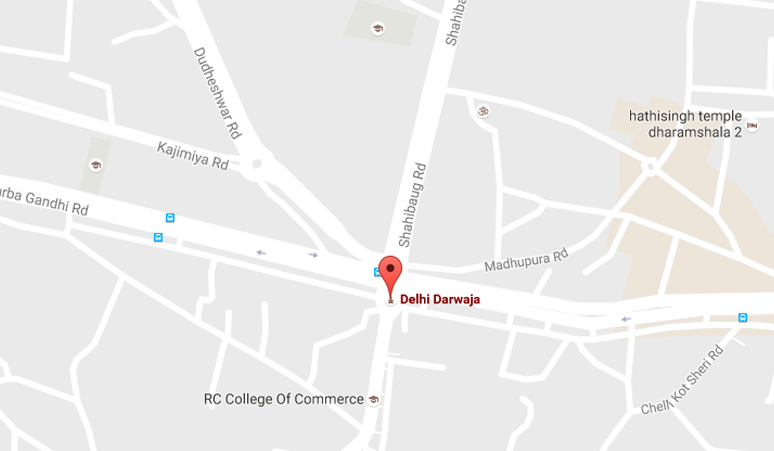

Laliwala IT's senior team of Big Data specialists delivers Apache Hadoop Corporate Training. Based in Ahmedabad, Gujarat, India, we provide hands-on training sessions for enterprises looking to upskill their teams on the Apache Hadoop ecosystem, the leading open-source framework for distributed storage and processing of large datasets.

Apache Hadoop is the foundation of many Big Data architectures, enabling organizations to store, process, and analyze massive amounts of data across clusters of computers. Enterprises use it widely for data warehousing, log processing, recommendation systems, fraud detection, and advanced analytics. Our corporate training programs are tailored to your team's skill level and business needs, and are available in on-site, online, or hybrid formats.

Corporate Training Programs We Offer

- Hadoop Fundamentals Training – Hadoop architecture, HDFS, YARN, MapReduce programming, cluster setup

- HDFS Administration Training – HDFS architecture, NameNode, DataNode, High Availability, Federation, Snapshots

- MapReduce Development Training – Mapper, Reducer, Combiner, Partitioner, InputFormat, OutputFormat, Counters

- Apache Hive Training – HiveQL, partitioning, bucketing, UDFs, optimization, HCatalog, Hive on Tez/Spark

- Apache HBase Training – NoSQL database, column-family storage, schema design, CRUD operations, coprocessors

- Apache Pig Training – Pig Latin, data flows, UDFs, PiggyBank, optimization for ETL pipelines

- Apache Sqoop & Flume Training – Data ingestion from RDBMS to Hadoop, log collection, streaming data

- Apache Oozie & ZooKeeper Training – Workflow scheduling, coordinator jobs, distributed coordination

- Apache Spark with Hadoop Training – Spark Core, Spark SQL, Spark Streaming, MLlib, GraphX integration

- Hadoop Security & Kerberos Training – Authentication, authorization, encryption, Apache Ranger, Knox

Corporate Training Benefits

- Customized Curriculum – Training tailored to your specific Big Data implementation and business requirements

- Real-World Project Experience – Trainers actively work on Hadoop projects with real enterprise use cases

- Flexible Scheduling – Training delivered according to your team's availability and timelines

- Hands-On Labs – 60% practical labs, 40% theory with real-world Big Data scenarios

- Post-Training Support – 30 days email/chat support after training completion

- Certification Preparation – Preparation for Cloudera Certified Associate (CCA) and Cloudera Certified Professional (CCP) exams

- Training Materials – Comprehensive courseware, lab exercises, sample code, presentations, and documentation

Course Curriculum - Comprehensive Hadoop Training (4-5 Days)

Day 1: Hadoop Fundamentals & HDFS Architecture

- Introduction to Big Data & Hadoop Ecosystem

- Hadoop Architecture: HDFS, YARN, MapReduce

- HDFS Architecture: NameNode, DataNode, Secondary NameNode

- HDFS Read/Write Pipeline & Data Replication

- Hadoop Cluster Setup: Single-Node & Multi-Node Cluster

- HDFS Commands: File operations, Permissions, Quotas, Snapshots

- YARN Architecture: ResourceManager, NodeManager, ApplicationMaster

- YARN Schedulers: FIFO, Capacity, Fair Scheduler

- MapReduce Fundamentals: Mapper, Reducer, Driver Class

- MapReduce Data Types: Writable, WritableComparable

- InputFormats: TextInputFormat, KeyValueTextInputFormat, SequenceFileInputFormat

- OutputFormats: TextOutputFormat, SequenceFileOutputFormat

- Hadoop Configuration Files: core-site.xml, hdfs-site.xml, mapred-site.xml, yarn-site.xml
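As a taste of the Day 1 material on HDFS blocks and replication, the following is a minimal sketch of the sizing arithmetic. It assumes the stock defaults of a 128 MB block size and a replication factor of 3; the function name `hdfs_footprint` is hypothetical, and real clusters often override both settings in hdfs-site.xml.

```python
import math

BLOCK_SIZE_MB = 128  # assumption: default dfs.blocksize in Hadoop 2.x/3.x
REPLICATION = 3      # assumption: default dfs.replication


def hdfs_footprint(file_size_mb, block_size_mb=BLOCK_SIZE_MB, replication=REPLICATION):
    """Return (block_count, raw_storage_mb) for a single file (illustrative helper)."""
    # A file is split into fixed-size blocks; the last block may be partially filled.
    blocks = math.ceil(file_size_mb / block_size_mb) if file_size_mb > 0 else 0
    # A partial block only uses the disk space it holds, so raw usage is size x replicas.
    raw_mb = file_size_mb * replication
    return blocks, raw_mb


print(hdfs_footprint(1000))  # (8, 3000)
```

A 1000 MB file therefore occupies 8 blocks and about 3000 MB of raw disk across the cluster under these assumptions.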

Day 2: Advanced MapReduce & Hadoop Development

- Advanced MapReduce: Combiner, Partitioner, Distributed Cache

- Custom Writable & WritableComparable Implementation

- MapReduce Counters: Built-in & Custom Counters

- MapReduce Join Operations: Map-Side Join, Reduce-Side Join

- Secondary Sorting & Total Order Sorting

- Data Compression in Hadoop: Gzip, Snappy, LZO, Bzip2

- Sequence Files & Avro Files for Efficient Storage

- Hadoop Streaming: Writing MapReduce Jobs in Python/Ruby

- Testing MapReduce Jobs: MRUnit, LocalJobRunner

- Debugging & Logging in Hadoop

- Performance Tuning: Configuration Parameters, Speculative Execution
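The Hadoop Streaming topic from Day 2 can be illustrated with a small word-count sketch in Python. It is not course material, only an illustration: the `mapper`, `reducer`, and `run_local` names are hypothetical, and `run_local` imitates the shuffle-and-sort phase in a single process so the logic can be tried without a cluster.

```python
from itertools import groupby


def mapper(lines):
    """Emit one 'word<TAB>1' record per word, as a streaming mapper writes to stdout."""
    for line in lines:
        for word in line.strip().lower().split():
            yield f"{word}\t1"


def reducer(sorted_records):
    """Sum the counts per word; records must arrive sorted by key."""
    pairs = (record.split("\t", 1) for record in sorted_records)
    for word, group in groupby(pairs, key=lambda kv: kv[0]):
        yield f"{word}\t{sum(int(count) for _, count in group)}"


def run_local(lines):
    """Simulate map -> sort -> reduce in one process (stand-in for the framework)."""
    return list(reducer(sorted(mapper(lines))))


print(run_local(["big data", "Big Hadoop data"]))
# ['big\t2', 'data\t2', 'hadoop\t1']
```

On a real cluster the two functions would typically live in separate scripts that read from standard input, passed to the Hadoop Streaming JAR through its `-mapper` and `-reducer` options; the JAR location depends on the installation.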

Day 3: Hadoop Ecosystem - Hive, Pig, HBase

- Apache Hive: Data Warehousing on Hadoop

- Hive Architecture: Metastore, HiveQL, Execution Engines (MapReduce, Tez, Spark)

- Hive Tables: Managed vs External Tables, Partitioning, Bucketing

- HiveQL: DDL, DML, Joins, Subqueries, Windowing Functions

- User-Defined Functions (UDF, UDAF, UDTF) in Hive

- Hive Optimization: ORC/Parquet Formats, Vectorization, Cost-Based Optimizer

- Apache Pig: Data Flow Language for ETL

- Pig Latin: LOAD, FOREACH, FILTER, GROUP, JOIN, ORDER, LIMIT

- Pig UDFs: Java & Python UDFs, PiggyBank Library

- Apache HBase: NoSQL Database on HDFS

- HBase Architecture: HMaster, RegionServer, ZooKeeper Coordination

- HBase Schema Design: Row Key Design, Column Families, Versions

- HBase CRUD Operations: Put, Get, Scan, Delete, Increment

- HBase Filters, Coprocessors, Bulk Load
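Row key design, listed above under HBase schema design, is often explained with a "salted" key that spreads sequential writes across regions. The sketch below is one possible illustration under stated assumptions: the key layout, the bucket count, and the `salted_row_key` helper are hypothetical, and it builds only the key bytes without calling any HBase client API.

```python
import zlib

NUM_BUCKETS = 8  # hypothetical: number of salt buckets, e.g. one per pre-split region


def salted_row_key(device_id: str, epoch_seconds: int, buckets: int = NUM_BUCKETS) -> bytes:
    """Build a row key of the form '<salt>|<device_id>|<timestamp>' (illustrative layout)."""
    # A stable checksum of the device id picks the bucket, so one device always
    # lands in the same bucket while different devices spread across buckets.
    salt = zlib.crc32(device_id.encode("utf-8")) % buckets
    # Zero-padding keeps lexicographic byte order consistent with numeric order.
    return f"{salt:02d}|{device_id}|{epoch_seconds:010d}".encode("utf-8")


earlier = salted_row_key("sensor-42", 1700000000)
later = salted_row_key("sensor-42", 1700000060)
print(earlier[:2] == later[:2], earlier < later)  # True True
```

The trade-off of this design is that a scan over a time range must fan out across every salt bucket, which is why the choice of row key is usually driven by the dominant read pattern.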

Day 4: Data Ingestion, Orchestration & Production Deployment

- Apache Sqoop: Data Import/Export between RDBMS and Hadoop

- Sqoop Incremental Import: Append, LastModified

- Sqoop Import Control Options: --query, --columns, --where, --boundary-query

- Apache Flume: Log Collection & Streaming Data Ingestion

- Flume Architecture: Sources, Channels, Sinks, Interceptors

- Apache Oozie: Workflow & Coordinator Jobs

- Oozie Actions: MapReduce, Hive, Pig, Sqoop, Shell, Java, Email

- Apache ZooKeeper: Distributed Coordination Service

- ZooKeeper Use Cases: Leader Election, Configuration Management, Distributed Locks

- Hadoop Cluster Security: Kerberos Authentication

- Apache Ranger: Centralized Security Administration

- Apache Knox: Gateway for Hadoop Clusters

- Hadoop Cluster Monitoring: Ambari, Cloudera Manager

- Hadoop Backup & Disaster Recovery

- Hadoop on Cloud: EMR (AWS), Dataproc (GCP), HDInsight (Azure)

Who Should Attend?

- Data Engineers & Big Data Developers

- Database Administrators transitioning to Big Data

- Data Architects designing Big Data solutions

- DevOps Engineers managing Hadoop clusters

- ETL Developers & Data Warehouse Professionals

- Data Scientists working with large datasets

- Technical Leads & Project Managers

- System Administrators managing Hadoop infrastructure

Why Choose Laliwala IT for Apache Hadoop Corporate Training?

- 7+ Years of Big Data Expertise – Deep experience with the Hadoop ecosystem

- Real-World Project Experience – Trainers actively work on Hadoop projects

- Customized Training Materials – Comprehensive courseware, lab exercises, and sample code

- Flexible Scheduling – Training delivered according to your team's availability

- Batch Sizes – Ideal batch size of 5-15 participants for maximum engagement

- Post-Training Support – 30 days email/chat support after training completion

- Hands-On Labs – Real-time practical exercises on multi-node Hadoop clusters

- Ecosystem Coverage – Training on 10+ Hadoop ecosystem components

- Certification Preparation – CCA Spark and Hadoop Developer, CCP Data Engineer exam preparation

- Global Delivery – Trusted by enterprises across India, USA, UK, Canada, Australia, UAE, Europe

Hadoop Ecosystem Versions Covered

- Apache Hadoop 3.x (Latest)

- Apache Hadoop 2.x (Legacy/Production)

- Cloudera Data Platform (CDP) 7.x

- Hortonworks Data Platform (HDP) 3.x

- Apache Hive 3.x, Apache HBase 2.x, Apache Pig 0.17+

- Apache Spark 3.x with Hadoop

© 2016 Laliwala IT. All rights reserved.