Apache Hadoop Training | Master Big Data Processing & Ecosystem

Become a Big Data Expert with Apache Hadoop — Instructor-led Live Online Sessions

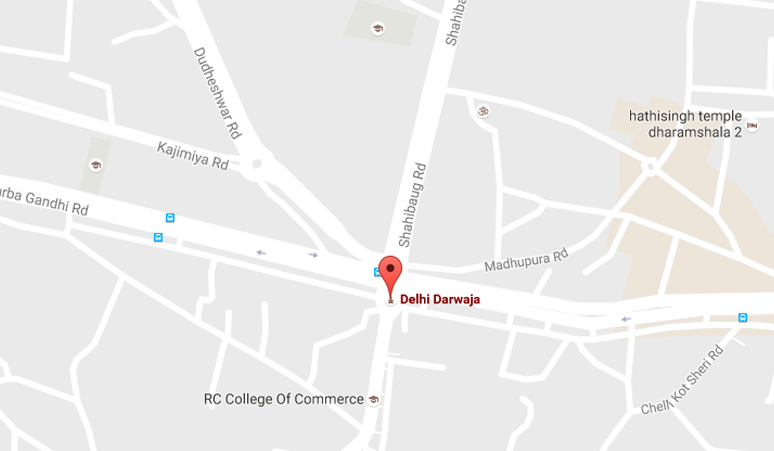

Apache Hadoop Training by Laliwala IT is designed for data engineers, developers, and IT professionals who want to master a widely used big data platform. Based in Ahmedabad, Gujarat, India, we deliver live, interactive, project-based training covering everything from HDFS and MapReduce to YARN, cluster setup, and the wider Hadoop ecosystem.

Our online Apache Hadoop course features real-time instructor-led classes, hands-on projects, flexible schedules, and career guidance. Whether you're a beginner or looking to upgrade your big data skills, this training will turn you into a job-ready Hadoop professional.

Course Modules — Comprehensive Apache Hadoop Training (6-7 Weeks | 45+ Hours)

- Module 1: Big Data & Hadoop Fundamentals – What is Big Data? 5Vs, Hadoop history, architecture, ecosystem overview, use cases

- Module 2: HDFS (Hadoop Distributed File System) – HDFS architecture, NameNode, DataNode, block storage, replication, read/write pipeline

- Module 3: HDFS Operations & Commands – HDFS shell commands, file permissions, snapshots, quotas, balancer, data recovery

- Module 4: MapReduce Framework – MapReduce paradigm, Mapper, Reducer, Combiner, Partitioners, Shuffle & Sort phase

- Module 5: MapReduce Programming (Java) – Writing MR jobs, InputFormats, OutputFormats, Counters, custom data types, Writable

- Module 6: YARN (Yet Another Resource Negotiator) – ResourceManager, NodeManager, ApplicationMaster, Schedulers (FIFO, Capacity, Fair)

- Module 7: Hadoop Cluster Setup – Single node, pseudo-distributed, fully distributed cluster, configuration files, AWS EMR setup

- Module 8: Hadoop Ecosystem Tools – Hive, Pig, HBase, Sqoop, Flume, Oozie, ZooKeeper, Hue – introduction & integration

- Module 9: Data Ingestion (Sqoop & Flume) – Import/export between RDBMS and HDFS, incremental imports, Flume agents, sources, sinks

- Module 10: Workflow & Orchestration – Oozie workflows, coordinators, bundles, scheduling MapReduce, Hive, Pig jobs

- Module 11: Hadoop Security & High Availability – Kerberos authentication, ACLs, HDFS HA (QJM, NFS), ResourceManager HA

- Module 12: Real-World Capstone Project – Build an end-to-end batch processing pipeline for log analytics or clickstream data
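The block storage and replication topics in Module 2 come down to simple arithmetic. The sketch below is illustrative only: it assumes the common Hadoop 3.x defaults of a 128 MB block size (`dfs.blocksize`) and a replication factor of 3 (`dfs.replication`), and the function name `hdfs_footprint` is hypothetical, not part of any Hadoop API.

```python
import math

BLOCK_SIZE_MB = 128  # assumed default for dfs.blocksize
REPLICATION = 3      # assumed default for dfs.replication


def hdfs_footprint(file_size_mb, block_size_mb=BLOCK_SIZE_MB, replication=REPLICATION):
    """Return (blocks, block_replicas, raw_storage_mb) for a single file."""
    # A file is split into fixed-size blocks; the last block may be partial.
    blocks = math.ceil(file_size_mb / block_size_mb)
    # Every block is stored `replication` times across DataNodes.
    block_replicas = blocks * replication
    # A partial last block only occupies its actual size on disk,
    # so raw usage is the file size times the replication factor.
    raw_storage_mb = file_size_mb * replication
    return blocks, block_replicas, raw_storage_mb


print(hdfs_footprint(1000))
```

With these assumed defaults, a 1000 MB file is split into 8 blocks, stored as 24 block replicas, and uses about 3000 MB of raw cluster storage.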

What's Included in Apache Hadoop Training?

- Live Instructor-led classes (real-time Q&A, screen sharing, doubt clearing)

- Recorded sessions for revision anytime

- Hands-on assignments & industry-level big data projects

- Study materials (PDFs, MapReduce code examples, cluster configs)

- Certificate of completion (recognized by industry partners)

- Placement assistance – resume & interview prep, freelance guidance

- Lifetime access to course updates and student community

Detailed Curriculum Highlights

Week 1-2: HDFS & MapReduce Core

- Introduction to distributed computing and Hadoop design principles

- HDFS architecture deep dive: block placement, rack awareness, heartbeats

- Hands-on HDFS commands: put, get, copyToLocal, cat, du, dfsadmin

- Understanding NameNode metadata (fsimage, edits log) & Secondary NameNode

- MapReduce data flow: input splits, mapping, shuffling, sorting, reducing

- Writing custom MapReduce jobs for word count, log analysis, join operations

- Using Counters for job metrics and debugging

- Custom Writable and WritableComparable implementations
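The MapReduce data flow listed above (mapping, shuffling, sorting, reducing) can be sketched in a few lines of plain Python. This is a single-process illustration of the idea using word count, not Hadoop code: it uses no Hadoop APIs, and the names `mapper`, `shuffle_and_sort`, `reducer`, and `run_word_count` are hypothetical.

```python
from collections import defaultdict


def mapper(line):
    """Map phase: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_and_sort(pairs):
    """Shuffle and sort phase: group values by key, then order the keys."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reducer(key, values):
    """Reduce phase: collapse all values for one key into a single count."""
    return key, sum(values)


def run_word_count(lines):
    """Run the three phases in sequence over a list of input lines."""
    mapped = [pair for line in lines for pair in mapper(line)]
    return dict(reducer(key, values) for key, values in shuffle_and_sort(mapped))


counts = run_word_count(["big data big cluster", "data node"])
print(counts)  # {'big': 2, 'cluster': 1, 'data': 2, 'node': 1}
```

On a real cluster the map and reduce calls run in parallel on different nodes and the shuffle moves data over the network, but the contract between the phases is the same as in this sketch.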

Week 3-4: YARN, Cluster Setup & Ecosystem

- YARN architecture: ResourceManager, NodeManager, ApplicationMaster lifecycle

- Configuring CapacityScheduler and FairScheduler for multi-tenancy

- Setting up a Hadoop cluster on AWS EMR, Google Dataproc, or a local VM

- Ecosystem introduction: Hive (warehousing), Pig (data flow), HBase (NoSQL)

- Using Sqoop to import/export data between MySQL/Oracle and HDFS

- Flume for streaming log collection from sources to HDFS

- ZooKeeper for distributed coordination and configuration management

- Hue web interface for browsing HDFS, Hive queries, job management

Week 5-6: Advanced Operations, Security & Capstone

- Oozie workflows: creating job DAGs for complex ETL pipelines

- HDFS High Availability (HA) with Quorum Journal Manager (QJM)

- ResourceManager HA setup and failover configuration

- Kerberos authentication for secure Hadoop clusters

- HDFS encryption at rest and data masking

- Performance tuning: MapReduce parameters, speculative execution, JVM reuse

- Monitoring tools: Hadoop Admin UI, YARN ResourceManager UI, Ganglia, Ambari

- Capstone: Build a complete batch processing pipeline for e-commerce clickstream analytics

Tools & Technologies Covered

- Apache Hadoop 3.x, HDFS, MapReduce, YARN

- Languages: Java (primary), Python (streaming), Shell scripting

- Ecosystem: Hive, Pig, HBase, Sqoop, Flume, Oozie, ZooKeeper, Hue

- Cluster: AWS EMR, Google Dataproc, Cloudera CDH, Hortonworks HDP

- Monitoring: Ambari, Ganglia, Prometheus, Grafana

- Orchestration: Apache Oozie, Apache Airflow (overview)

Why Choose Laliwala IT for Apache Hadoop Online Training?

- Industry Expert Trainers: 10+ years of Big Data & Hadoop experience

- Live Project Experience: Build at least 3 real-world big data pipelines plus a final portfolio

- Flexible Batches: Weekday & weekend options, recorded backup for missed classes

- Small Batch Size: Max 10-12 students for personalized attention

- Affordable Fees: High-quality training at competitive rates from our Ahmedabad hub

- Job Assistance: Regular tie-ups with IT companies & placement cell

- Certification: ISO- and government-recognized certificate after successful completion

- 24/7 Lab Access: Online Hadoop clusters & learning management system

- Global Recognition: Trained students from India, USA, UK, Canada, Australia, UAE

- Post-training Support: Doubt clearing via dedicated forum & email for 6 months

Who Should Join?

- Data engineers & developers who want to start a Big Data career

- Java/Python professionals moving to the Hadoop ecosystem

- Database administrators exploring distributed storage solutions

- System administrators managing Hadoop clusters

- Data scientists requiring big data processing skills

- College students seeking job-ready Hadoop skills

- Working professionals aiming for Cloudera/Hortonworks certification

© 2025 Laliwala IT. All rights reserved.